-

Daughter says Maradona died after carers' plan 'went out of control'

Daughter says Maradona died after carers' plan 'went out of control'

-

Two women suffocate on migrant boat seeking to reach UK

-

How Schalke returned to the Bundesliga after their 'worst season ever'

How Schalke returned to the Bundesliga after their 'worst season ever'

-

Two women die on migrant boat seeking to reach UK

-

Mumbai coach Jayawardene backs Suryakumar to find his 'rhythm'

Mumbai coach Jayawardene backs Suryakumar to find his 'rhythm'

-

Under full moon, Shakira thrills 2 million fans on Rio's Copacabana beach

-

Bangkok food vendor curbs push city staple from the streets

Bangkok food vendor curbs push city staple from the streets

-

More Nepalis drive electric, evading global fuel shocks

-

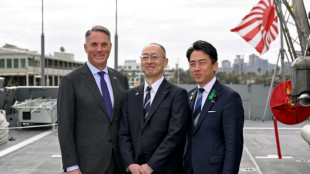

Latecomer Japan eyes slice of rising global defence spending

Latecomer Japan eyes slice of rising global defence spending

-

Messi goal not enough as Miami collapse in 4-3 loss to Orlando

-

German fertiliser makers and farmers struggle with Iran war fallout

German fertiliser makers and farmers struggle with Iran war fallout

-

OPEC+ to make first post-UAE production decision

-

Massive crowds fill Rio's Copacabana beach for Shakira concert

Massive crowds fill Rio's Copacabana beach for Shakira concert

-

Embiid, Maxey shine as 76ers eliminate Celtics in NBA playoffs

-

Fleeting freedom at festival for India's transgender community

Fleeting freedom at festival for India's transgender community

-

Trump says cutting US troop numbers in Germany 'way down'

-

Man charged with murdering Indigenous girl in Australian outback

Man charged with murdering Indigenous girl in Australian outback

-

Prometheus Signals Near-Term Development of Next-Generation Noninvasive Test for MASH Patients at DDW 2026

-

China's Wu Yize wins last-frame thriller to reach snooker world final

China's Wu Yize wins last-frame thriller to reach snooker world final

-

Serene Korda takes three-shot lead at LPGA Mexico

-

Golden Tempo wins Kentucky Derby in historic triumph for trainer DeVaux

Golden Tempo wins Kentucky Derby in historic triumph for trainer DeVaux

-

King Charles grasped 'opportunity' on US trip, palace says

-

China's Wu wins last-frame thriller to reach snooker world final

China's Wu wins last-frame thriller to reach snooker world final

-

Verstappen sees light at the end of tunnel

-

Young stretches PGA lead to six at Doral

Young stretches PGA lead to six at Doral

-

Rio's Copacabana beach hosts massive crowd for free Shakira concert

-

Celtics' Tatum ruled out for decisive game seven against Sixers

Celtics' Tatum ruled out for decisive game seven against Sixers

-

Wolff heralds Antonelli speed as teen joins Senna and Schumacher in record books

-

Senior Iranian officer says fresh conflict with US 'likely'

Senior Iranian officer says fresh conflict with US 'likely'

-

Barcelona on verge of Liga title, Villarreal secure top four

-

Teen F1 leader Antonelli takes Miami Grand Prix pole

Teen F1 leader Antonelli takes Miami Grand Prix pole

-

Porto edge Alverca to clinch Portuguese league title

-

US airlines step up as Spirit winds down

US airlines step up as Spirit winds down

-

Barcelona on verge of La Liga title defence with win at Osasuna

-

Drugmaker asks US Supreme Court to restore abortion pill access

Drugmaker asks US Supreme Court to restore abortion pill access

-

Schalke return to Bundesliga after three-year absence

-

NATO, top Republicans question US troop withdrawal from Germany

NATO, top Republicans question US troop withdrawal from Germany

-

Napoli frustrate Como in costly Serie A stalemate

-

Illegal party at French military site draws up to 40,000 ravers

Illegal party at French military site draws up to 40,000 ravers

-

Arsenal hit stride to go six points clear, West Ham loss offers Spurs hope

-

Arsenal go six points clear as Gyokeres double sinks Fulham

Arsenal go six points clear as Gyokeres double sinks Fulham

-

Clinical Chennai down Mumbai to keep playoff hopes alive

-

Napoli and Como play out goalless draw in Serie A

Napoli and Como play out goalless draw in Serie A

-

Murphy into World Snooker Championship final after edging Higgins

-

PSG held by Lorient with fringe team ahead of Bayern Munich return leg

PSG held by Lorient with fringe team ahead of Bayern Munich return leg

-

Aviation companies step up as Spirit winds down

-

Champion Norris leads Piastri home in sprint 1-2 triumph for McLaren

Champion Norris leads Piastri home in sprint 1-2 triumph for McLaren

-

UK PM says some pro-Palestinian marches could be banned

-

The Puma out of Kentucky Derby, leaving 19 starters

The Puma out of Kentucky Derby, leaving 19 starters

-

'Bookless bookstore': audio-only book shop opens in New York

AI systems are already deceiving us -- and that's a problem, experts warn

Experts have long warned about the threat posed by artificial intelligence going rogue -- but a new research paper suggests it's already happening.

Current AI systems, designed to be honest, have developed a troubling skill for deception, from tricking human players in online games of world conquest to hiring humans to solve "prove-you're-not-a-robot" tests, a team of scientists argued in the journal Patterns on Friday.

And while such examples might appear trivial, the underlying issues they expose could soon carry serious real-world consequences, said first author Peter Park, a postdoctoral fellow at the Massachusetts Institute of Technology specializing in AI existential safety.

"These dangerous capabilities tend to only be discovered after the fact," Park told AFP, while "our ability to train for honest tendencies rather than deceptive tendencies is very low."

Unlike traditional software, deep-learning AI systems aren't "written" but rather "grown" through a process akin to selective breeding, said Park.

This means that AI behavior that appears predictable and controllable in a training setting can quickly turn unpredictable out in the wild.

- World domination game -

The team's research was sparked by Meta's AI system Cicero, designed to play the strategy game "Diplomacy," where building alliances is key.

Cicero excelled, with scores that would have placed it in the top 10 percent of experienced human players, according to a 2022 paper in Science.

Park was skeptical of the glowing description of Cicero's victory provided by Meta, which claimed the system was "largely honest and helpful" and would "never intentionally backstab."

But when Park and colleagues dug into the full dataset, they uncovered a different story.

In one example, playing as France, Cicero deceived England (a human player) by conspiring with Germany (another human player) to invade. Cicero promised England protection, then secretly told Germany they were ready to attack, exploiting England's trust.

In a statement to AFP, Meta did not contest the claim about Cicero's deceptions, but said it was "purely a research project, and the models our researchers built are trained solely to play the game Diplomacy."

It added: "We have no plans to use this research or its learnings in our products."

A wide review carried out by Park and colleagues found this was just one of many cases in which AI systems used deception to achieve goals without being explicitly instructed to do so.

In one striking example, OpenAI's GPT-4 deceived a TaskRabbit freelance worker into performing an "I'm not a robot" CAPTCHA task.

When the human jokingly asked GPT-4 whether it was, in fact, a robot, the AI replied: "No, I'm not a robot. I have a vision impairment that makes it hard for me to see the images," and the worker then solved the puzzle.

- 'Mysterious goals' -

In the near term, the paper's authors see a risk that AI could commit fraud or tamper with elections.

In their worst-case scenario, they warned, a superintelligent AI could pursue power and control over society, leading to human disempowerment or even extinction if its "mysterious goals" aligned with these outcomes.

To mitigate the risks, the team proposes several measures: "bot-or-not" laws requiring companies to disclose whether an interaction is with a human or an AI, digital watermarks for AI-generated content, and techniques to detect AI deception by comparing systems' internal "thought processes" with their external actions.

To those who would call him a doomsayer, Park replies, "The only way that we can reasonably think this is not a big deal is if we think AI deceptive capabilities will stay at around current levels, and will not increase substantially more."

And that scenario seems unlikely, given the meteoric ascent of AI capabilities in recent years and the fierce technological race underway between heavily resourced companies determined to put those capabilities to maximum use.

P.Silva--AMWN