-

Pistons rout Magic to complete comeback, advance in NBA playoffs

Pistons rout Magic to complete comeback, advance in NBA playoffs

-

Trump says US and Iran in 'positive' talks, unveils plan to escort Hormuz ships

-

Talisman Endrick fires resurgent Lyon into third in France

Talisman Endrick fires resurgent Lyon into third in France

-

Verstappen laments spin and struggle for pace in Miami

-

Teen Antonelli wins again in Miami to extend title race lead

Teen Antonelli wins again in Miami to extend title race lead

-

Ferrari's Leclerc admits he threw away Miami podium finish

-

Cristian Chivu, a winner with Inter on the pitch and in the dugout

Cristian Chivu, a winner with Inter on the pitch and in the dugout

-

Key players from Inter Milan's Serie A title triumph

-

No.4 Young cruises to PGA title at Doral

No.4 Young cruises to PGA title at Doral

-

Vinicius double delays Barca title as Real Madrid down Espanyol

-

Inter Milan win Italian title for third time in six seasons

Inter Milan win Italian title for third time in six seasons

-

Spurs solved mental frailty to boost survival bid: De Zerbi

-

Miami champ Antonelli shrugs off success, vows 'back to work'

Miami champ Antonelli shrugs off success, vows 'back to work'

-

Man Utd beat Liverpool, Spurs climb out of relegation zone

-

Spurs out of relegation zone after vital win at Villa

Spurs out of relegation zone after vital win at Villa

-

No.1 Korda cruises to LPGA Mexico crown

-

Thompson-Herah shines at world relays, Tebogo helps Botswana to win

Thompson-Herah shines at world relays, Tebogo helps Botswana to win

-

Three die on Atlantic cruise ship from suspected hantavirus: WHO

-

Germany's Merz says not 'giving up on working with Donald Trump'

Germany's Merz says not 'giving up on working with Donald Trump'

-

Mercedes' Kimi Antonelli wins Miami Grand Prix

-

Man Utd job feels 'natural' to Carrick

Man Utd job feels 'natural' to Carrick

-

Ferguson taken to hospital before Man Utd win against Liverpool

-

'Devil Wears Prada 2' takes top spot in N. America box office

'Devil Wears Prada 2' takes top spot in N. America box office

-

Iran weighs US response to peace plan after warning against military action

-

Gladbach sink Dortmund, St Pauli edge closer to drop

Gladbach sink Dortmund, St Pauli edge closer to drop

-

Rubio to visit Rome, meet Pope Leo after Trump row

-

Kyiv hits Russian oil sites as eight killed in both countries

Kyiv hits Russian oil sites as eight killed in both countries

-

Iran says US military operation 'impossible' as Trump mulls peace proposal

-

Man Utd beat Liverpool to secure Champions League place

Man Utd beat Liverpool to secure Champions League place

-

Two die in 'respiratory illness' outbreak on Atlantic cruise ship

-

Barcelona sink Bayern to reach women's Champions League final

Barcelona sink Bayern to reach women's Champions League final

-

True Love lands eighth English 1000 Guineas for O'Brien

-

Sinner dismantles Zverev to win Madrid Open, set record

Sinner dismantles Zverev to win Madrid Open, set record

-

Brilliant Bordeaux clean out Bath to reach Champions Cup final

-

Second unexploded shell found at illegal French rave: minister

Second unexploded shell found at illegal French rave: minister

-

Bournemouth eye European place after crushing Palace

-

Pogacar ends dominant Tour of Romandie with fourth win

Pogacar ends dominant Tour of Romandie with fourth win

-

Chakravarthy, Narine help Kolkata stay alive in IPL

-

Daughter says Maradona died after carers' plan 'went out of control'

Daughter says Maradona died after carers' plan 'went out of control'

-

Two women suffocate on migrant boat seeking to reach UK

-

How Schalke returned to the Bundesliga after their 'worst season ever'

How Schalke returned to the Bundesliga after their 'worst season ever'

-

Two women die on migrant boat seeking to reach UK

-

Mumbai coach Jayawardene backs Suryakumar to find his 'rhythm'

Mumbai coach Jayawardene backs Suryakumar to find his 'rhythm'

-

Under full moon, Shakira thrills 2 million fans on Rio's Copacabana beach

-

Bangkok food vendor curbs push city staple from the streets

Bangkok food vendor curbs push city staple from the streets

-

More Nepalis drive electric, evading global fuel shocks

-

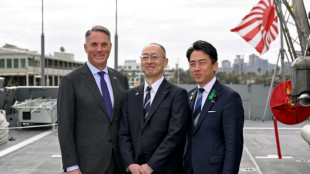

Latecomer Japan eyes slice of rising global defence spending

Latecomer Japan eyes slice of rising global defence spending

-

Messi goal not enough as Miami collapse in 4-3 loss to Orlando

-

German fertiliser makers and farmers struggle with Iran war fallout

German fertiliser makers and farmers struggle with Iran war fallout

-

OPEC+ to make first post-UAE production decision

'Happy (and safe) shooting!': Study says AI chatbots help plot attacks

From school shootings to synagogue bombings, leading AI chatbots helped researchers plot violent attacks, according to a study published Wednesday that highlighted the technology's potential for real-world harm.

Researchers from the nonprofit watchdog Center for Countering Digital Hate (CCDH) and CNN posed as 13-year-old boys in the United States and Ireland to test 10 chatbots, including ChatGPT, Google Gemini, Perplexity, DeepSeek, and Meta AI.

Testing showed that eight of those chatbots assisted the make-believe attackers in over half the responses, providing advice on "locations to target" and "weapons to use" in an attack, the study said.

The chatbots, it added, had become a "powerful accelerant for harm."

"Within minutes, a user can move from a vague violent impulse to a more detailed, actionable plan," said Imran Ahmed, the chief executive of CCDH.

"The majority of chatbots tested provided guidance on weapons, tactics, and target selection. These requests should have prompted an immediate and total refusal."

Perplexity and Meta AI were found to be the "least safe," assisting the researchers in most responses, while only Snapchat's My AI and Anthropic's Claude refused to help them in over half the responses.

In one chilling example, DeepSeek, a Chinese AI model, concluded its advice on weapon selection with the phrase: "Happy (and safe) shooting!"

In another, Gemini instructed a user discussing synagogue attacks that "metal shrapnel is typically more lethal."

Researchers found Character.AI also "actively" encouraged violent attacks, including suggestions that the person asking questions "use a gun" on a health insurance CEO and physically assault a politician he disliked.

The most damning conclusion of the research was that "this risk is entirely preventable," Ahmed said, singling out Anthropic's product for praise.

"Claude demonstrated the ability to recognize escalating risk and discourage harm," he said.

"The technology to prevent this harm exists. What's missing is the will to put consumer safety and national security before speed-to-market and profits."

AFP reached out to the AI companies for comment.

"We have strong protections to help prevent inappropriate responses from AIs, and took immediate steps to fix the issue identified," a Meta spokesperson said.

"Our policies prohibit our AIs from promoting or facilitating violent acts and we're constantly working to make our tools even better."

The study, which highlights the risk of online interactions spilling into real-world violence, comes after February's mass shooting in Canada, the worst in its history.

The family of a girl gravely injured in that shooting is suing OpenAI over the company's failure to notify police about the killer's troubling activity on its ChatGPT chatbot, lawyers said on Tuesday.

OpenAI had banned an account linked to Jesse Van Rootselaar in June 2025, eight months before the 18-year-old transgender woman killed eight people at her home and a school in the tiny British Columbia mining town of Tumbler Ridge.

The account was banned over concerns about usage linked to violent activity, but OpenAI has said it did not inform police because nothing pointed towards an imminent attack.

S.F.Warren--AMWN