-

ILO names US official as number two amid grumbling over unpaid dues

ILO names US official as number two amid grumbling over unpaid dues

-

Son of director Rob Reiner pays tribute to slain parents

-

AI united Altman and Musk, then drove them apart

AI united Altman and Musk, then drove them apart

-

Sinner overcomes Bonzi in record hunt at Madrid Open

-

Havana property market stirs as investors bet on political change

Havana property market stirs as investors bet on political change

-

Children's lives at risk from US funding cuts to vaccine alliance: CEO

-

Brazil's Lula has surgery to remove skin lesion from scalp

Brazil's Lula has surgery to remove skin lesion from scalp

-

Defending champion Alcaraz to miss French Open with wrist injury

-

Battle lines drawn over EU's next big budget

Battle lines drawn over EU's next big budget

-

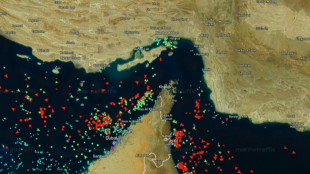

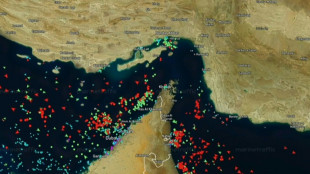

Renewed hopes of Iran peace talks keep oil under $100 per barrel

-

Lebanon truce extended as Pakistan bids to revive US-Iran talks

Lebanon truce extended as Pakistan bids to revive US-Iran talks

-

Assisted dying bill scuppered as UK advocates vow to fight on

-

Alex Marquez quickest in Spanish MotoGP practice

Alex Marquez quickest in Spanish MotoGP practice

-

Former New Zealand cricketer Bracewell given two-year ban for cocaine use

-

Justice Dept ends criminal probe into US Fed chair Powell

Justice Dept ends criminal probe into US Fed chair Powell

-

Merz says no 'immediate' Ukraine EU membership, floats Kyiv joining meetings

-

G7 says nature talks a success as climate sidelined for US

G7 says nature talks a success as climate sidelined for US

-

'Hands off': Teddy bear tale teaches French preschoolers consent

-

Russia, Ukraine swap 193 POWs

Russia, Ukraine swap 193 POWs

-

'We have to be stronger': De Zerbi demands Spurs improve as relegation fears mount

-

Man City will not risk Rodri in FA Cup semi-final: Guardiola

Man City will not risk Rodri in FA Cup semi-final: Guardiola

-

Macron leaves future open as political curtain nears

-

Germany launches spying probe into Signal attacks targeting MPs

Germany launches spying probe into Signal attacks targeting MPs

-

Arsenal haven't given up on title despite blowing lead: Arteta

-

Injured Spain star Yamal will come back stronger at World Cup: Flick

Injured Spain star Yamal will come back stronger at World Cup: Flick

-

Oil prices fall on hopes of fresh Iran peace talks

-

Chelsea can still save season despite slump: McFarlane

Chelsea can still save season despite slump: McFarlane

-

Echoing Diana, Prince Harry visits Ukraine's deminers

-

Chelsea's Estevao out for season, World Cup in doubt

Chelsea's Estevao out for season, World Cup in doubt

-

PSG's Luis Enrique 'couldn't care less' about World Cup

-

Ryanair says to cut Berlin flights, blaming taxes

Ryanair says to cut Berlin flights, blaming taxes

-

From sun to subsoil, how countries are moving away from fossil fuels

-

London's Jewish community on edge amid attacks

London's Jewish community on edge amid attacks

-

Ranieri's Roma role ends after spat with coach Gasperini: club

-

Warming El Nino set to return in mid-2026: UN

Warming El Nino set to return in mid-2026: UN

-

Porsche exits sports car maker Bugatti Rimac

-

Bill legalising assisted dying in England and Wales set to fail

Bill legalising assisted dying in England and Wales set to fail

-

Chinese EVs, flying cars take centre stage at world's biggest auto show

-

Macron says still sees France, Germany developing European fighter jet

Macron says still sees France, Germany developing European fighter jet

-

Al Ahli star Mahrez warns team-mates not to take Japanese rivals for granted

-

Greece expands sunbed-free beach list for 2026

Greece expands sunbed-free beach list for 2026

-

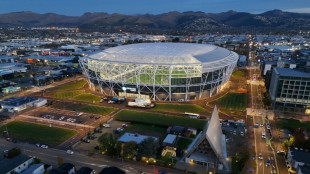

Rugby legend McCaw hails 'spectacular' NZ stadium built after deadly quake

-

Mideast war drives up condom, rubber glove prices: manufacturers

Mideast war drives up condom, rubber glove prices: manufacturers

-

Gulf states in limbo as US-Iran crisis drags on

-

Liverpool's Slot warns 'margins are small' in Champions League push

Liverpool's Slot warns 'margins are small' in Champions League push

-

Musk says Tesla has started 'robotaxi' production

-

Suspected Nazi-looted Stradivarius reappears in France, says expert

Suspected Nazi-looted Stradivarius reappears in France, says expert

-

Glacier block delays route-setting on Everest

-

Appeal board says homophobia 'commonplace' in Aussie Rules

Appeal board says homophobia 'commonplace' in Aussie Rules

-

Hot pants: Tokyo government workers swap suits for shorts

Generative AI's most prominent skeptic doubles down

Two and a half years after ChatGPT rocked the world, scientist and writer Gary Marcus remains generative artificial intelligence's great skeptic, offering a counter-narrative to Silicon Valley's AI true believers.

Marcus became a prominent figure of the AI revolution in 2023, when he sat beside OpenAI chief Sam Altman at a Senate hearing in Washington as both men urged politicians to take the technology seriously and consider regulation.

Much has changed since then. Altman has abandoned his calls for caution, instead teaming up with Japan's SoftBank and funds in the Middle East to propel his company to sky-high valuations as he tries to make ChatGPT the next era-defining tech behemoth.

"Sam's not getting money anymore from the Silicon Valley establishment," and his seeking funding from abroad is a sign of "desperation," Marcus told AFP on the sidelines of the Web Summit in Vancouver, Canada.

Marcus's criticism centers on a fundamental belief: generative AI, the predictive technology that churns out seemingly human-level content, is simply too flawed to be transformative.

The large language models (LLMs) that power these capabilities are inherently broken, he argues, and will never deliver on Silicon Valley's grand promises.

"I'm skeptical of AI as it is currently practiced," he said. "I think AI could have tremendous value, but LLMs are not the way there. And I think the companies running it are not mostly the best people in the world."

His skepticism stands in stark contrast to the prevailing mood at the Web Summit, where most conversations among 15,000 attendees focused on generative AI's seemingly infinite promise.

Many believe humanity stands on the cusp of achieving superintelligence or artificial general intelligence (AGI), technology that could match and even surpass human capability.

That optimism has driven OpenAI's valuation to $300 billion, an unprecedented level for a startup, with billionaire Elon Musk's xAI racing to keep pace.

Yet for all the hype, the practical gains remain limited.

The technology excels mainly at coding assistance for programmers and text generation for office work. AI-created images, while often entertaining, serve primarily as memes or deepfakes, offering little obvious benefit to society or business.

Marcus, a longtime New York University professor, champions a fundamentally different approach to building AI -- one he believes might actually achieve human-level intelligence in ways that current generative AI never will.

"One consequence of going all-in on LLMs is that any alternative approach that might be better gets starved out," he explained.

This tunnel vision will "cause a delay in getting to AI that can help us beyond just coding -- a waste of resources."

- 'Right answers matter' -

Instead, Marcus advocates for neurosymbolic AI, an approach that attempts to rebuild human logic artificially rather than simply training computer models on vast datasets, as is done with ChatGPT and similar products like Google's Gemini or Anthropic's Claude.

He dismisses fears that generative AI will eliminate white-collar jobs, citing a simple reality: "There are too many white-collar jobs where getting the right answer actually matters."

This points to AI's most persistent problem: hallucinations, the technology's well-documented tendency to produce confident-sounding mistakes.

Even AI's strongest advocates acknowledge this flaw may be impossible to eliminate.

Marcus recalls a telling exchange from 2023 with LinkedIn founder Reid Hoffman, a Silicon Valley heavyweight: "He bet me any amount of money that hallucinations would go away in three months. I offered him $100,000 and he wouldn't take the bet."

Looking ahead, Marcus warns of a darker consequence once investors realize generative AI's limitations. Companies like OpenAI will inevitably monetize their most valuable asset: user data.

"The people who put in all this money will want their returns, and I think that's leading them toward surveillance," he said, pointing to Orwellian risks for society.

"They have all this private data, so they can sell that as a consolation prize."

Marcus acknowledges that generative AI will find useful applications in areas where occasional errors don't matter much.

"They're very useful for auto-complete on steroids: coding, brainstorming, and stuff like that," he said.

"But nobody's going to make much money off it because they're expensive to run, and everybody has the same product."

T.Ward--AMWN