-

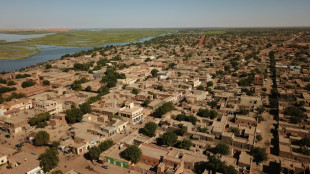

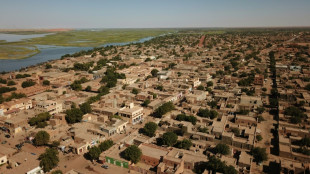

Gunfire in Mali as army battles 'terrorist groups'

Gunfire in Mali as army battles 'terrorist groups'

-

Gunfire rocks Mali districts, including junta stronghold: witnesses

-

Welsh football icon Ramsey takes on marathon challenge for charity

Welsh football icon Ramsey takes on marathon challenge for charity

-

Aussie Rules fires appeals chair over ruling on anti-gay slur

-

Lakers' OT win puts Rockets on brink of NBA playoff elimination

Lakers' OT win puts Rockets on brink of NBA playoff elimination

-

From radiation to invasion: a Chernobyl worker's two wars

-

AI firms flex lobbying muscle on both side of Atlantic

AI firms flex lobbying muscle on both side of Atlantic

-

First female Archbishop of Canterbury to meet Pope Leo

-

Hundreds of firefighters battle Japan forest blazes

Hundreds of firefighters battle Japan forest blazes

-

Lakers down Rockets in overtime for 3-0 series lead, Celtics hold off Sixers

-

US envoys heading to Pakistan for uncertain Iran talks

US envoys heading to Pakistan for uncertain Iran talks

-

'Hockey is religion': Montreal fans pack church for playoff push

-

Billionaire Elon Musk enters courtroom showdown with OpenAI

Billionaire Elon Musk enters courtroom showdown with OpenAI

-

Crunch nuclear proliferation meeting at UN amid raging global wars

-

Awkward debut for Trump at correspondents' dinner

Awkward debut for Trump at correspondents' dinner

-

Under blackout threat, Wikimedia reaches compromise with Indonesia

-

'Going to the moon': Irish footballers return to China 50 years after historic tour

'Going to the moon': Irish footballers return to China 50 years after historic tour

-

Spurs' Wembanyama ruled out of game 3 after concussion

-

Palestinians to vote in first elections since Gaza war

Palestinians to vote in first elections since Gaza war

-

Pragmatism, not patriotism, pushes young Lithuanians to military service

-

Group Seeking Court Order to Halt CMS Medicare THC Hemp Marijuana Program

Group Seeking Court Order to Halt CMS Medicare THC Hemp Marijuana Program

-

Peru confirms election runoff date, court says no to Lima re-vote

-

Venezuela, Colombia pledge military cooperation on first post-Maduro visit

Venezuela, Colombia pledge military cooperation on first post-Maduro visit

-

US hopes for progress, but Iran says not direct talks

-

Maine governor nixes data center moratorium in state

Maine governor nixes data center moratorium in state

-

Betis's Bellerin further dents Real Madrid title hopes

-

Lens rally but title bid fades after draw at Brest

Lens rally but title bid fades after draw at Brest

-

OpenAI CEO apologizes to Canada town for not reporting mass shooter

-

UK PM vows legislation to ban Iran Guards: report

UK PM vows legislation to ban Iran Guards: report

-

Leipzig tighten top-four grip as Union's Eta suffers second loss

-

Furyk named USA captain for 2027 Ryder Cup

Furyk named USA captain for 2027 Ryder Cup

-

EU, US sign critical minerals plan to counter China reliance

-

The 'housewives' did well -- Ukraine takes drone know-how abroad

The 'housewives' did well -- Ukraine takes drone know-how abroad

-

Court removes US businessman from managing his Brazilian football team

-

'Natural' birth control risks unwanted pregnancy, experts warn

'Natural' birth control risks unwanted pregnancy, experts warn

-

No.2 Korda boosts LPGA Chevron lead to seven

-

EU trade chief seeks 'positive traction' on US steel tariffs

EU trade chief seeks 'positive traction' on US steel tariffs

-

Anthropic says Google to pump $40 bn into AI startup

-

Kohli makes Gujarat pay as Bengaluru cruise to IPL win

Kohli makes Gujarat pay as Bengaluru cruise to IPL win

-

One injured in bomb attack on Colombia military base

-

Envoys from Iran, US expected in Pakistan for new talks

Envoys from Iran, US expected in Pakistan for new talks

-

ILO names US official as number two amid grumbling over unpaid dues

-

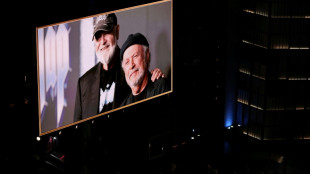

Son of director Rob Reiner pays tribute to slain parents

Son of director Rob Reiner pays tribute to slain parents

-

AI united Altman and Musk, then drove them apart

-

Sinner overcomes Bonzi in record hunt at Madrid Open

Sinner overcomes Bonzi in record hunt at Madrid Open

-

Havana property market stirs as investors bet on political change

-

Children's lives at risk from US funding cuts to vaccine alliance: CEO

Children's lives at risk from US funding cuts to vaccine alliance: CEO

-

Brazil's Lula has surgery to remove skin lesion from scalp

-

Defending champion Alcaraz to miss French Open with wrist injury

Defending champion Alcaraz to miss French Open with wrist injury

-

Battle lines drawn over EU's next big budget

AI's blind spot: tools fail to detect their own fakes

When outraged Filipinos turned to an AI-powered chatbot to verify a viral photograph of a lawmaker embroiled in a corruption scandal, the tool failed to detect that it was fabricated -- even though it had generated the image itself.

Internet users are increasingly turning to chatbots to verify images in real time, but the tools often fail, raising questions about their visual debunking capabilities at a time when major tech platforms are scaling back human fact-checking.

In many cases, the tools wrongly identify images as real even when those images were produced by the same generative models, further muddying an online information landscape awash with AI-generated fakes.

Among them is a fabricated image circulating on social media of Elizaldy Co, a former Philippine lawmaker charged by prosecutors in a multibillion-dollar flood-control corruption scam that sparked massive protests in the disaster-prone country.

The image of Co, whose whereabouts have been unknown since the official probe began, appeared to show him in Portugal.

When online sleuths tracking him asked Google's new AI Mode whether the image was real, it incorrectly said it was authentic.

AFP's fact-checkers tracked down its creator and determined that the image was generated using Google AI.

"These models are trained primarily on language patterns and lack the specialized visual understanding needed to accurately identify AI-generated or manipulated imagery," Alon Yamin, chief executive of AI content detection platform Copyleaks, told AFP.

"With AI chatbots, even when an image originates from a similar generative model, the chatbot often provides inconsistent or overly generalized assessments, making them unreliable for tasks like fact-checking or verifying authenticity."

Google did not respond to AFP's request for comment.

- 'Distinguishable from reality' -

AFP found similar examples of AI tools failing to verify their own creations.

During last month's deadly protests over lucrative benefits for senior officials in Pakistan-administered Kashmir, social media users shared a fabricated image purportedly showing men marching with flags and torches.

An AFP analysis found it was created using Google's Gemini AI model.

But Gemini and Microsoft's Copilot falsely identified it as a genuine image of the protest.

"This inability to correctly identify AI images stems from the fact that they (AI models) are programmed only to mimic well," Rossine Fallorina, from the nonprofit Sigla Research Center, told AFP.

"In a sense, they can only generate things to resemble. They cannot ascertain whether the resemblance is actually distinguishable from reality."

Earlier this year, Columbia University's Tow Center for Digital Journalism tested the ability of seven AI chatbots -- including ChatGPT, Perplexity, Grok, and Gemini -- to verify 10 news photographs taken by photojournalists.

All seven models failed to correctly identify the provenance of the photos, the study said.

- 'Shocked' -

AFP traced Co's photo, which garnered more than a million views across social media, to a middle-aged web developer in the Philippines, who said he created it "for fun" using Nano Banana, Gemini's AI image generator.

"Sadly, a lot of people believed it," he told AFP, requesting anonymity to avoid a backlash.

"I edited my post -- and added 'AI generated' to stop the spread -- because I was shocked at how many shares it got."

Such cases show how AI-generated photos flooding social platforms can look virtually identical to real imagery.

The trend has fueled concerns as surveys show online users are increasingly shifting from traditional search engines to AI tools to gather and verify information.

The shift comes after Meta announced earlier this year that it was ending its third-party fact-checking program in the United States, turning over the task of debunking falsehoods to ordinary users under a model known as "Community Notes."

Human fact-checking has long been a flashpoint in hyperpolarized societies, where conservative advocates accuse professional fact-checkers of liberal bias, a charge the fact-checkers reject.

AFP currently works in 26 languages with Meta's fact-checking program, including in Asia, Latin America, and the European Union.

Researchers say AI models can be useful to professional fact-checkers, helping them quickly geolocate images and spot visual clues to establish authenticity. But they caution that the tools cannot replace the work of trained human fact-checkers.

"We can't rely on AI tools to combat AI in the long run," Fallorina said.

burs-ac/sla/sms

M.Thompson--AMWN